VIDEO SOLUTIONS

Artificial Intelligence in Video Surveillance

Current products on the market promise high accuracy and efficient, fast searching and detection.

One of the goals of AI with video surveillance is to limit the amount of continual human monitoring needed, allowing users to react to events.

PHOTO COURTESY OF UMBO COMPUTER VISION

AI-enabled facial recognition analytics can be useful for a variety of vertical markets.

PHOTO COURTESY OF ANYVISION

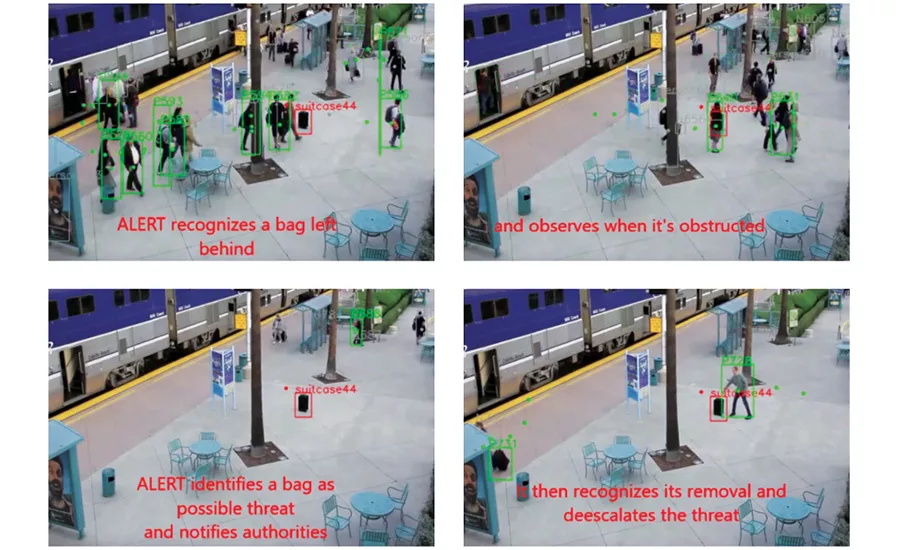

Detection of unattended objects is useful for airports, mass transit and other locations.

PHOTO COURTESY OF IRVINE SENSORS

Unusual motion detection, such as crowds of people in a restricted area, can be learned for an application.

PHOTO COURTESY OF AVIGILON

Though many projects have been proven with pilots and real-case deployments, artificial intelligence (AI) in the security industry is in the relatively early stages and its role in video surveillance is as varied as the vertical markets that implement it. The term itself still has a lot of noise surrounding it and many in the industry shy away from using AI — opting instead for more specific characteristics to define their technology. Integrators must sift through all the noise to find appropriate products for themselves and their customers. To begin, it’s important to understand what’s currently available in the marketplace.

“Today, there are more cameras and recorded video than ever before, which means security operators are faced with the challenge of keeping pace. AI is a technology that can help overcome this challenge as it doesn’t get bored and can analyze more video data than humans ever possibly could. It is designed to bring the most important events and insight to users’ attention, freeing them to do what they do best: make critical decisions,” explains Dr. Mahesh Saptharishi, CTO and senior vice president, Avigilon, Vancouver, B.C., Canada. According to Saptharishi, the company’s AI-powered video management software called Avigilon Control Center (ACC) automates the detection function of surveillance and removes the need for operators to constantly watch video screens. The company also has a deep learning search tool called Avigilon Appearance Search, which allows users to initiate a search using a physical descriptor for results across one or multiple sites within seconds.

Specific applications — or narrow AI as some experts refer to it — are the biggest products in play for video surveillance right now, because as much as we’d like computers to think completely on their own, they still must be trained to do a specific task and learn from what is fed to them. While AI is the general term used in the industry, machine learning or intelligent analytics, along with more specific types of machine learning, are more apropos terms, say industry experts.

In a general sense, “machine learning allows programmers to enable a computer to assess and alter its computational processes through training,” says Sean Lawlor, data scientist at Genetec, Montreal, Quebec, Canada.

The type of machine learning or AI technology that a company uses may serve as a differentiator. At Genetec, the company’s AutoVu automatic license plate recognition (ALPR) system uses a form of supervised machine learning called deep learning or deep neural networks (DNN). “Working with deep learning, we are training our algorithms using a structured dataset of raw LPR images and a limited set of possible classes or outputs,” Lawlor explains. “Our current offering contains DNN classifiers that are very efficient at reading characters, rejecting bad reads and recognizing a license plate’s state of origin.”

What Questions Should Security Integrators Ask?

Security experts recommend asking end users the following questions to determine the right AI video solution for an application:

- How large is the existing video surveillance infrastructure (how many cameras, how many locations, etc.)?

- What specific problems are you trying to find a solution for?

- What is the level of event activity?

- What accuracy levels do you expect for the application?

- Do you want real-time analysis or detection, easier and faster post-recording search, or both?

Distinguishing Technologies

Chris Johnston, regional marketing manager for Bosch Security and Safety Systems, Fairport, N.Y., says that deep learning and machine learning are two related but different forms of AI. “Machine learning is a way of training an algorithm by feeding it huge amounts of data as a method of training it to adjust itself to improve its performance. Deep learning is a different, more complex form of machine-learning-based artificial neural networks (ANNs), which actually mimic the structure of the human brain,” he explains. He says that each ANN has distinct layers of neurons with connections to neurons in other layers, and that each layer picks out a specific feature, such as a curve or an edge in an image. The more layers, the deeper the learning.
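To make the idea of a layer that "picks out" an edge concrete, here is a minimal sketch in plain Python. It is not Bosch's algorithm or any vendor's model: the tiny image and the hand-written filter are assumptions chosen for illustration, whereas a real deep network learns its filters from training data and stacks many such layers.

```python
# Illustrative only: a hand-written vertical-edge filter standing in for
# what one early layer of a trained network might learn.

def convolve2d(image, kernel):
    """Slide a small kernel over a 2D image (valid positions only).

    This is the cross-correlation form that deep learning libraries
    commonly call convolution (the kernel is not flipped).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = 0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        out.append(row)
    return out


def relu(feature_map):
    """Keep positive responses and zero out the rest."""
    return [[max(0, v) for v in row] for row in feature_map]


# Hypothetical 4x6 image: dark (0) on the left, bright (1) on the right.
IMAGE = [[0, 0, 0, 1, 1, 1] for _ in range(4)]

# Responds strongly where brightness increases from left to right.
VERTICAL_EDGE = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

edge_map = relu(convolve2d(IMAGE, VERTICAL_EDGE))
print(edge_map)
```

The nonzero values in `edge_map` line up with the dark-to-bright boundary in the image. A deeper network would feed this output into further layers that combine edges into shapes and, eventually, objects.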

Bosch’s lines of IP 7000, 8000, and 9000 series cameras include the company’s latest version of intelligent video analytics, which adapt to conditions such as changes in lighting or environment such as rain, snow, clouds or leaves blowing in the wind. In addition, a few pilot projects are already in place with Bosch’s new firmware version (to be officially released this year) that includes an AI/machine-learning algorithm at the edge.

San Francisco-based BrainChip uses a type of AI for its BrainChip Studio and Accelerator offerings called a spiking neural network. The technology enables facial classification on partial faces and forensic searches. Bob Beachler, senior vice president of marketing and business development at the company, says that type of AI allows the surveillance system to train instantaneously, without large data sets. “It can train on a single image,” he says.

“A spiking neural network is modeling the characteristics of the human neurons. Deep learning is about a multiple layer convolutional network. We do similar multiple layer neural networks, but we don’t use the convolutions. We use spikes and synapses to model the neuron functions. It’s a type of neural network and a type of machine learning,” Beachler explains.
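For readers unfamiliar with spiking models, the sketch below shows a leaky integrate-and-fire neuron, a generic textbook model of a spiking neuron. It is not BrainChip's implementation; the function name, threshold and leak values are assumptions chosen only to show how a neuron accumulates input and emits a spike when a threshold is crossed.

```python
# Illustrative only: a textbook leaky integrate-and-fire neuron.
# Parameter values are arbitrary assumptions, not taken from any product.

def simulate_lif(input_current, threshold=1.0, leak=0.9):
    """Return a list of 0/1 spikes, one per time step."""
    potential = 0.0
    spikes = []
    for current in input_current:
        # The membrane potential decays a little, then integrates new input.
        potential = potential * leak + current
        if potential >= threshold:
            spikes.append(1)   # fire a spike
            potential = 0.0    # and reset
        else:
            spikes.append(0)
    return spikes


weak = simulate_lif([0.05] * 10)    # input too weak to ever reach threshold
strong = simulate_lif([0.6] * 10)   # input strong enough to fire regularly

print("weak input spikes:  ", weak)
print("strong input spikes:", strong)
```

Information is carried by when and how often the neuron spikes rather than by a continuous activation value, which is the main contrast with the convolutional layers Beachler describes.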

BrainChip’s facial classification can be used for blacklisted or restricted persons at schools, campuses, hotels or hospitals, or for identifying suspects or a person of interest for which the end user will not have facial biometric key points for identification. A forensic search can identify a logo or pattern on a t-shirt and quickly search large amounts of video footage for relevant images, Beachler says.

With its enterprise-level ALERT solution, Irvine Sensors Corp. of Costa Mesa, Calif., uses a type of deep learning technology combined with saliency-based processing to emulate part of how the brain learns and works for event awareness and real-time alerting, according to Jim Justice, vice president of the company’s video analytics division on the commercial side. ALERT uses multi-processing GPUs on top of multi-core CPUs and machine learning for applications such as unattended baggage, perimeter breaches, people counting or crowd formation, and critical detection of complex scenarios such as thrown, dropped or launched objects. Smart cities and other large organizations can use the company’s real-time threat analysis in large deployments with thousands of cameras and accuracy rates between 97 percent and 98 percent, according to Dan DeBlasio, director of ALERT business development at the neural science video analytics company.

Justice says understanding exactly what the end user wants to solve is paramount to picking the appropriate technologies. “Do they care about dropped objects, people climbing fences or going in forbidden areas? Those salient events are what become the driver for how the integrator should select and deploy the appropriate system,” he says.

Deploying AI

Aside from the different types of machine learning used for AI in video surveillance, there are also different avenues of deployment, including on the edge (i.e., the camera) or on the backend (i.e., the server), and on the physical network or through the cloud.

IC Realtime’s ella, for example, is a cloud-based, deep learning video search engine that comes with a plug-in network device that requires little in the way of programming or setup, according to Brian Levy, vice president of Agoura Hills, Calif.-based integrator company SOV Security. The solution can find images quickly and is able to recognize colors, objects, people, vehicles, animals and more. “You can teach ella that a certain body frame of a car is a Ford truck and she can then find all the Ford trucks, distinguishing among others. She understands and she’ll notify the end user based on those requirements,” Levy shares.

Do AI-Powered Video Analytics Require Additional Programming, Installation or Maintenance?

The short answer is: it varies. Some solutions are truly plug-and-play extensions to an existing video surveillance system with programming done before the installation, but they may be limited in their functions. Other solutions, particularly large-scale solutions or those with many application possibilities, require more training or interaction from the security integrator and/or end user.

“Environments vary and that can be a complex thing,” says Jim Justice of Irvine Sensors Corp. “The developer initially trains the system, and at the time of deployment the training is typically adequate for the end user’s job. After that, if requirements change, then some additional training is required for the system to be able to respond to that.”

For AI solutions at the edge, power and bandwidth limitations are important considerations for the integrator, and can impact installation. “If you want to depend on the cloud, regular internet bandwidth won’t cut it for high-activity applications when something meaningful happens all the time, since bandwidth will be in short supply,” says Brian Levy of SOV Security. “Standard internet just isn’t fast enough yet for more than a few cameras.”

Some manufacturers that offer AI-powered edge solutions allow users to stream video only in critical situations or alarm events to limit the amount of video sent, according to Chris Johnston of Bosch Security and Safety Systems.
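The idea of streaming only during alarm events can be sketched as follows. This is a simplified illustration, not any manufacturer's firmware: the `Frame` class, the `alarm` flag and the `pre_roll` parameter are hypothetical names, and the alarm decision is assumed to come from an on-camera analytic.

```python
# Illustrative only: choose which frames an edge device would send upstream.
from dataclasses import dataclass


@dataclass
class Frame:
    timestamp: float
    alarm: bool  # assumed to be set by a hypothetical on-camera analytic


def frames_to_upload(frames, pre_roll=1):
    """Select alarm frames plus `pre_roll` frames of context before each."""
    keep = set()
    for i, frame in enumerate(frames):
        if frame.alarm:
            for j in range(max(0, i - pre_roll), i + 1):
                keep.add(j)
    return [frames[i] for i in sorted(keep)]


# Ten hypothetical frames; the analytic raises an alarm on frames 4 and 5.
frames = [Frame(timestamp=t, alarm=(t in (4, 5))) for t in range(10)]
uploaded = frames_to_upload(frames)

print([f.timestamp for f in uploaded])
print(f"{len(uploaded)} of {len(frames)} frames sent")
```

In this toy case, only a fraction of the frames leave the camera, which is the bandwidth saving the approach is meant to deliver.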

Notwithstanding deployment avenue, bandwidth and power considerations, for the most part, say security experts, integrators still sell and install an AI-powered video solution the same way as any surveillance package. Says Sean Lawlor of Genetec, “Of course it depends on the system, but mostly they still require the same tuning, and the same camera and lighting adjustments to get the best results possible.”

SOV Security has deployed ella at diverse locations from hospitals to houses. Levy says that artificial intelligence appeals to a variety of vertical markets right now for different, specific video applications. “With a true machine learning product, a customer uses it and gives the system feedback and it actually gets smarter and better at what it’s doing,” Levy explains.

Agent Video Intelligence (Agent Vi) also offers artificial intelligence in the cloud as software as a service (SaaS). The company offers its innoVi Edge device, which allows any IP camera to connect to the company’s centrally managed cloud-based analytics. “It enables customers to get the best total cost of ownership and also the best support. We can deploy the same solution on customer premises if they don’t want to be on the public cloud,” shares Zvika Ashani, co-founder and CTO of Agent Vi, Rosh HaAyin, Israel. The company does both real-time and post-recording search using AI, though it focuses on real-time, Ashani says. The innoVi algorithm can be used to detect specific colors or differentiate between specific types of vehicles, such as motorcycles, buses or bicycles.

From an integrator’s perspective, Ashani says, an AI-based system is easy to set up because it is machine learning and fully automated, with most of the deep learning done off site before deployment. “Secondly, an integrator and end user will notice a difference in accuracy. There should be much more accurate results, a higher detection rate and a lot less false alarms than traditional analytics. These are the biggest points of impact,” he says.
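When comparing systems on detection rate and false alarms, it helps to compute both figures from the same trial. The sketch below uses made-up counts, and the function and parameter names are assumptions for illustration; none of the numbers come from a vendor or from this article.

```python
# Illustrative only: two simple figures an integrator might track in a pilot.

def detection_metrics(true_alerts, false_alerts, missed_events):
    """Return (detection rate, share of alerts that were false)."""
    total_events = true_alerts + missed_events   # real events that occurred
    total_alerts = true_alerts + false_alerts    # alerts the system raised
    detection_rate = true_alerts / total_events if total_events else 0.0
    false_alert_share = false_alerts / total_alerts if total_alerts else 0.0
    return detection_rate, false_alert_share


# Hypothetical pilot: 50 real events, 45 caught, plus 5 false alerts.
rate, false_share = detection_metrics(
    true_alerts=45, false_alerts=5, missed_events=5
)
print(f"detection rate: {rate:.0%}, false alerts: {false_share:.0%}")
```

Tracking both numbers matters because a system can look accurate on one while performing poorly on the other.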

While some current AI-powered video surveillance products are powered via server or already installed on a camera, others are “infrastructure agnostic,” meaning they can be deployed on any existing surveillance device, including a drone, wearable, fixed camera or VMS. This is the case for AnyVision’s plug-and-play technology for applications such as facial, body and object recognition, according to Max Constant, chief commercial officer and head of Americas at AnyVision, New York.

The company’s AI surveillance solutions are used in casinos, banks and other permanent locations, according to Constant, but the tactical solution can be used anywhere without existing camera infrastructure: think of a camera on a temporary pole. “It takes our platform and makes it a mobile solution for incident response or unstructured environments,” he says.

Getting Results

Though it’s important for an integrator to understand the type of AI or machine learning being used in a particular solution, it’s also important to consider speed, accuracy levels and scalability. “If the system takes three months to get to an 80 percent accuracy, you have to know if that will be sufficient for the end user. If it takes six months, well that may be too long,” says Shawn Guan, CEO of Umbo Computer Vision, San Francisco, Calif.

According to Guan, Umbo’s autonomous AI platform for video surveillance sees accuracy levels of 95 percent with many customers. The AI platform is designed to monitor human behaviors, and it offers extensions that allow end users to add other vendors’ analytics, such as facial recognition. The solution is built on a virtual private cloud infrastructure that has a centralized master AI platform that looks at all events from all customers to optimize its algorithms and rapidly increase learning, according to Guan. “The end goal of autonomous AI is that you really don’t need a human monitoring videos anymore. They simply monitor the events and react to those events,” he explains.

But experts in this space say that while AI technology has been proven to deliver great results for targeted solutions, the damage done by over-promised or less-than-worthy solutions remains a challenge right now. “The early days of video analytics led to unacceptable false alert rates and we’ve been fighting that problem ever since,” Justice says.

“That’s where deep learning and AI, properly tuned, can really have an impact. They can become really targeted and limit that noise of false alerts,” DeBlasio says.

As for the future, most experts expect more products to come to market as well as years of continual technological improvements. “I think we will see an explosion of more machine learning projects in all markets, but I still think general adoption is several years away,” Levy says. The good news, he says, “is the security industry is the kind of market that just cries out for this type of solution. It is useful, and the technology will just get better, faster and smarter.”

More Online

For more information about artificial intelligence used in the security industry, visit SDM’s website, where you will find the following articles:

“Get Smart About AI in Security”

www.SDMmag.com/get-smart-about-ai

“A New Wave of Video Analytics”

www.SDMmag.com/new-wave-video-analytics
